MORE INFORMATION ABOUT CLEANLINESS AND HYGIENE

What is hand washing?

Hand washing for hand hygiene is the act of cleaning one's hands, with or without the use of water or another liquid, or with the use of soap or, for example, ash, for the purpose of removing soil, dirt, and/or microorganisms.


Medical hand hygiene pertains to the hygiene practices related to the administration of medicine and medical care that prevent or minimize disease and the spreading of disease. The main medical purpose of washing hands is to cleanse the hands of pathogens (including bacteria or viruses) and chemicals which can cause personal harm or disease. This is especially important for people who handle food or work in the medical field, but it is also an important practice for the general public. People can become infected with respiratory illnesses such as influenza or the common cold, for example, if they don't wash their hands before touching their eyes, nose, or mouth. Indeed, the Centers for Disease Control and Prevention (CDC) has stated: "It is well documented that one of the most important measures for preventing the spread of pathogens is effective hand washing." As a general rule, handwashing protects people poorly or not at all from droplet- and airborne diseases, such as measles, chickenpox, influenza, and tuberculosis. It protects best against diseases transmitted through fecal-oral routes (such as many forms of stomach flu) and direct physical contact (such as impetigo).

Symbolic hand washing, using water only to wash hands, is a part of ritual handwashing featured in many religions, including Bahá'í Faith, Hinduism, and tevilah and netilat yadayim in Judaism. Similar to these are the practices of Lavabo in Christianity, Wudu in Islam and Misogi in Shintō.

Substances used

Soap and detergents

Removal of microorganisms from skin is enhanced by the addition of soaps or detergents to water. The main action of soaps and detergents is to reduce barriers to solution, and increase solubility. Water is an inefficient skin cleanser because fats and proteins, which are components of organic soil, are not readily dissolved in water. Cleansing is, however, aided by a reasonable flow of water.

Water temperature

Hot water that is comfortable for washing hands is not hot enough to kill bacteria. Bacteria grow much faster at body temperature (37 °C). However, warm, soapy water is more effective than cold, soapy water at removing the natural oils on your hands which hold soils and bacteria. Contrary to popular belief, however, scientific studies have shown that using warm water has no effect on reducing the microbial load on hands.

Solid soap

Solid soap, because of its reusable nature, may hold bacteria acquired from previous uses. Yet, it is unlikely that any bacteria are transferred to users of the soap, as the bacteria are rinsed off with the foam.

Antibacterial soap

Antibacterial soaps have been heavily promoted to a health-conscious public. To date, there is no evidence that using recommended antiseptics or disinfectants selects for antibiotic-resistant organisms in nature. However, antibacterial soaps contain common antibacterial agents such as Triclosan, which has an extensive list of resistant strains of organisms. So, even if antibiotic resistant strains aren't selected for by antibacterial soaps, they might not be as effective as they are marketed to be.

A comprehensive analysis from the University of Oregon School of Public Health indicated that plain soaps are as effective as consumer-grade anti-bacterial soaps containing triclosan in preventing illness and removing bacteria from the hands.

Hand antiseptic

A hand sanitizer or hand antiseptic is a non-water-based hand hygiene agent. In the late 1990s and early part of the 21st century, alcohol rub non-water-based hand hygiene agents (also known as alcohol-based hand rubs, antiseptic hand rubs, or hand sanitizers) began to gain popularity. Most are based on isopropyl alcohol or ethanol formulated together with a thickening agent such as Carbomer into a gel, or a humectant such as glycerin into a liquid, or foam for ease of use and to decrease the drying effect of the alcohol.

Hand sanitizers containing 60 to 95% alcohol are efficient germ killers. Alcohol rub sanitizers kill bacteria, multi-drug resistant bacteria (MRSA and VRE), tuberculosis, and some viruses (including HIV, herpes, RSV, rhinovirus, vaccinia, influenza, and hepatitis) and fungus. Alcohol rub sanitizers containing 70% alcohol kill 99.97% (a 3.5 log reduction, analogous to a 35 decibel reduction) of the bacteria on hands 30 seconds after application and 99.99% to 99.999% (a 4 to 5 log reduction) of the bacteria on hands 1 minute after application.
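The relationship between a "log reduction" and the percentage of bacteria killed can be worked out directly: a reduction of n logs leaves a surviving fraction of 10 to the power of -n. The following is a minimal sketch of that conversion; the function names are illustrative and do not come from any standard or from the studies cited.

```python
import math


def log_reduction_to_percent(log_reduction: float) -> float:
    """Percentage of organisms removed for a given log10 reduction."""
    surviving_fraction = 10.0 ** (-log_reduction)
    return (1.0 - surviving_fraction) * 100.0


def percent_to_log_reduction(percent_removed: float) -> float:
    """Log10 reduction corresponding to a percentage of organisms removed."""
    surviving_fraction = 1.0 - percent_removed / 100.0
    return -math.log10(surviving_fraction)


# Figures quoted in the text: 3.5, 4 and 5 log reductions.
for lr in (3.5, 4.0, 5.0):
    print(f"{lr} log reduction removes {log_reduction_to_percent(lr):.3f}% of organisms")
```

Rounded to two decimal places, a 3.5 log reduction corresponds to the 99.97% quoted above, and 4 and 5 log reductions correspond to 99.99% and 99.999%.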

Hand sanitizers are most effective against bacteria and less effective against some viruses. Alcohol-based hand sanitizers are almost entirely ineffective against norovirus or Norwalk type viruses, the most common cause of contagious gastroenteritis.

The CDC recommends hand washing over hand sanitizer rubs, particularly when hands are visibly dirty. The increasing use of these agents is based on their ease of use and rapid killing activity against micro-organisms; however, they should not serve as a replacement for proper hand washing unless soap and water are unavailable.

Frequent use of alcohol-based hand sanitizers can cause dry skin unless emollients and/or skin moisturizers are added to the formula. The drying effect of alcohol can be reduced or eliminated by adding glycerin and/or other emollients to the formula. In clinical trials, alcohol-based hand sanitizers containing emollients caused substantially less skin irritation and dryness than soaps or antimicrobial detergents. Allergic contact dermatitis, contact urticaria syndrome or hypersensitivity to alcohol or additives present in alcohol hand rubs rarely occur. The lower tendency to induce irritant contact dermatitis became an attraction as compared to soap and water hand washing.

Despite their effectiveness, non-water agents do not cleanse the hands of organic material, but simply disinfect them. It is for this reason that hand sanitizers are not as effective as soap and water at preventing the spread of many pathogens, since the pathogens still remain on the hands.

Alcohol-free hand sanitizer efficacy is heavily dependent on the ingredients and formulation, and historically has significantly under-performed alcohol and alcohol rubs. More recently, formulations that use benzalkonium chloride have been shown to have persistent and cumulative antimicrobial activity after application, unlike alcohol, which has been shown to decrease in efficacy after repeated use, probably due to progressive adverse skin reactions. Purell has been shown to fail to meet the FDA 21 CFR 333.470 performance standards for health-care personnel antiseptic hand washes, not just as a consequence of the decrease in effectiveness with repeated use, but due to a lack of persistence in antimicrobial activity after application and the decrease in effectiveness with heavy soil loads. In the same study, HandClens was shown to meet and exceed the FDA performance standards.

Ash or mud

Many people in low-income communities cannot afford soap and use ash or soil instead. Ash or soil may be more effective than water alone, but may be less effective than soap. The quality of the evidence is poor. One concern is that if the soil or ash is contaminated with microorganisms, it may increase the spread of disease rather than decrease it. Like soap, ash is a disinfecting agent (it is alkaline). The WHO has recommended ash or sand as an alternative when soap is not available.

Techniques

Soap and water

In overview, one must use soap and, if possible, warm running water, and wash all skin and nails thoroughly. However, ash can substitute for soap (see "Substances used" above) and cold water can also be used.

First one should rinse hands with warm water, keeping hands below wrists and forearms, to prevent contaminated water from moving from the hands to the wrists and arms. The warm water helps to open pores, which helps with the removal of microorganisms, without removing skin oils. One should use five milliliters of liquid soap, to completely cover the hands, and rub wet, soapy hands together, outside the running water, for at least 20 seconds.

The most commonly missed areas are the thumb, the wrist, the areas between the fingers, and under fingernails. Artificial nails and chipped nail polish harbor microorganisms.

Then one should rinse thoroughly, from the wrist to the fingertips to ensure that any microorganisms fall off the skin rather than onto skin.

One should use a paper towel to turn off the water. Dry hands and arms with a clean towel, disposable or not, and use a paper towel to open the door.

Moisturizing lotion is often recommended to keep the hands from drying out; dry skin can lead to skin damage, which can increase the risk of transmitting infection.

Various low-cost options can facilitate handwashing where tap water and/or soap is not available, for example pouring water from a hanging jerrycan or gourd with suitable holes, and/or using ash if needed in developing countries (see also "Substances used" above).

Hand antiseptics

Enough hand antiseptic or alcohol rub must be used to thoroughly wet or cover both hands. The front and back of both hands, the areas between the fingers, and the ends of all fingers are rubbed for approximately 30 seconds until the liquid, foam or gel is dry. The fingertips must also be cleaned well by rubbing them in each palm alternately.

Drying

Effective drying of the hands is an essential part of the hand hygiene process, but there is some debate over the most effective form of drying in washrooms. A growing volume of research suggests paper towels are much more hygienic than the electric hand dryers found in many washrooms.

In 2008, a study was conducted by the University of Westminster, London, and sponsored by the European Tissue Symposium, a paper-towel industry body, to compare the levels of hygiene offered by paper towels, warm-air hand dryers and the more modern jet-air hand dryers. The key findings were:

- after washing and drying hands with the warm-air dryer, the total number of bacteria was found to increase on average on the finger pads by 194% and on the palms by 254%

- drying with the jet-air dryer resulted in an increase on average of the total number of bacteria on the finger pads by 42% and on the palms by 15%

- after washing and drying hands with a paper towel, the total number of bacteria was reduced on average on the finger pads by up to 76% and on the palms by up to 77%.
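To make these percentages concrete, the sketch below applies the reported average finger-pad changes to a purely hypothetical starting count of 1,000 bacteria. The baseline and all names in the code are assumptions for illustration, not figures from the study. The paper-towel figure is reported as "up to 76%", so the value shown is the best case.

```python
def apply_percent_change(baseline_count: float, percent_change: float) -> float:
    """Return the count after a percentage increase (+) or decrease (-)."""
    return baseline_count * (1.0 + percent_change / 100.0)


# Average change in bacteria on the finger pads, as reported in the text.
# A negative value is a reduction; the paper-towel value is an upper bound.
FINGER_PAD_CHANGE_PERCENT = {
    "warm-air dryer": 194.0,
    "jet-air dryer": 42.0,
    "paper towel": -76.0,
}

HYPOTHETICAL_BASELINE = 1000  # assumed starting count, for illustration only

for method, change in FINGER_PAD_CHANGE_PERCENT.items():
    after = apply_percent_change(HYPOTHETICAL_BASELINE, change)
    print(f"{method}: {HYPOTHETICAL_BASELINE} -> {round(after)} bacteria")
```

Note that an increase of 194% means the count is nearly three times the starting value, not roughly double.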

The scientists also carried out tests to establish whether there was the potential for cross contamination of other washroom users and the washroom environment as a result of each type of drying method. They found that:

- the jet-air dryer, which blows air out of the unit at claimed speeds of 400 mph, was capable of blowing micro-organisms from the hands and the unit and potentially contaminating other washroom users and the washroom environment up to 2 metres away.

- use of a warm-air hand dryer spread micro-organisms up to 0.25 metres from the dryer.

- paper towels showed no significant spread of micro-organisms.

In 2005, in a study conducted by TUV Produkt und Umwelt, different hand drying methods were evaluated. The following changes in the bacterial count after drying the hands were observed:

| Drying method | Effect on bacterial count |
| --- | --- |
| Paper towels and roll | Decrease of 24% |
| Hot-air dryer | Increase of 12% |


Medical use

Medical hand-washing is for a minimum of 15 seconds, using generous amounts of soap and water or gel to lather and rub each part of the hands. Hands should be rubbed together with digits interlocking. If there is debris under fingernails, a bristle brush may be used to remove it. Since germs may remain in the water on the hands, it is important to rinse well and wipe dry with a clean towel. After drying, the paper towel should be used to turn off the water (and open any exit door if necessary). This avoids re-contaminating the hands from those surfaces.

The purpose of hand-washing in the health-care setting is to remove pathogenic microorganisms ("germs") and avoid transmitting them. The New England Journal of Medicine reports that a lack of hand-washing remains at unacceptable levels in most medical environments, with large numbers of doctors and nurses routinely forgetting to wash their hands before touching patients. One study showed that proper hand-washing and other simple procedures can decrease the rate of catheter-related bloodstream infections by 66 percent.

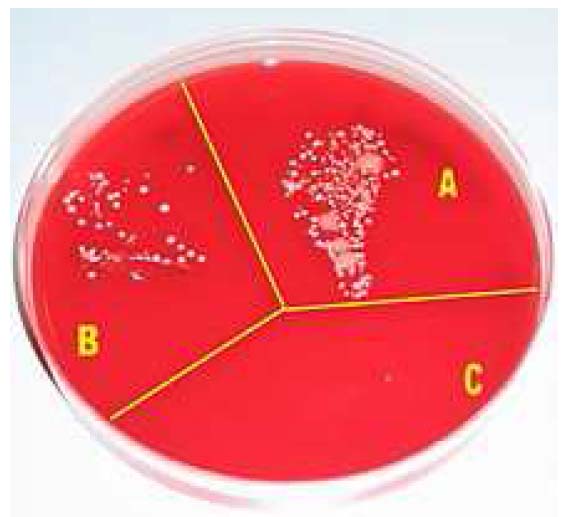

Microbial growth on a cultivation plate without procedures (A), after washing hands with soap (B) and after disinfection with alcohol (C).

The World Health Organization has published a sheet demonstrating standard hand-washing and hand-rubbing in health-care sectors. The draft guidance of hand hygiene by the organization can also be found at its website for public comment. A relevant review was conducted by Whitby et al. Commercial devices can measure and validate hand hygiene, if demonstration of regulatory compliance is required.

The World Health Organization has "Five Moments" for washing hands:

- before patient care

- after environmental contact

- after exposure to blood/body fluids

- before an aseptic task, and

- after patient care.

The addition of antiseptic chemicals to soap ("medicated" or "antimicrobial" soaps) confers killing action to a hand-washing agent. Such killing action may be desired prior to performing surgery or in settings in which antibiotic-resistant organisms are highly prevalent.

To 'scrub' one's hands for a surgical operation, it is necessary to have a tap that can be turned on and off without touching it with the hands, some chlorhexidine or iodine wash, sterile towels for drying the hands after washing, and a sterile brush for scrubbing and another sterile instrument for cleaning under the fingernails. All jewelry should be removed. This procedure requires washing the hands and forearms up to the elbow, usually 2–6 minutes. Long scrub-times (10 minutes) are not necessary. When rinsing, water on the forearms must be prevented from running back to the hands. After hand-washing is completed, the hands are dried with a sterile cloth and a surgical gown is donned.

Medical hand-washing became mandatory long after Hungarian physician Ignaz Semmelweis discovered its effectiveness in preventing disease in a hospital environment. There are electronic devices that provide feedback to remind hospital staff to wash their hands when they forget. One study has found decreased infection rates with their use.

Hand washing with wipes

The CDC also recommends hand sanitizing wipes as a convenient alternative when soap and water are unavailable, for example while traveling, and in certain health care settings.

Effectiveness

Hand washing has been shown to cut the number of child deaths from diarrhea (the second leading cause of child deaths) by almost half and from pneumonia (the leading cause of child deaths) by one-quarter. There are five critical times for washing hands with soap and/or using a hand antiseptic in relation to fecal-oral transmission: after using a bathroom (private or public), after changing a diaper, before feeding a child, before eating, and before preparing food or handling raw meat, fish, or poultry. The same applies to any other situation that could lead to contamination. To reduce the spread of germs, it is also better to wash the hands and/or use a hand antiseptic before and after tending to a sick person.

For control of staphylococcal infections in hospitals, it has been found that the greatest benefit from hand-cleansing came from the first 20% of washing, and that very little additional benefit was gained when hand cleansing frequency was increased beyond 35%. Washing with plain soap results in more than triple the rate of bacterial infectious disease transmitted to food as compared to washing with antibacterial soap. Comparing hand-rubbing with alcohol-based solution with handwashing with antibacterial soap for a median time of 30 seconds each showed that the alcohol hand-rubbing reduced bacterial contamination 26% more than the antibacterial soap. But soap and water is more effective than alcohol-based hand rubs for reducing H1N1 influenza A virus and Clostridium difficile spores from hands.

Religion

Symbolic hand washing, using water only, is part of the ritual handwashing that features in many religions, including the Bahá'í Faith, Hinduism, and tevilah and netilat yadayim in Judaism. Similar to these are the practices of Lavabo in Christianity, Wudu in Islam and Misogi in Shintō.

Hand washing behavior

The phrase "washing one's hands of" something means declaring one's unwillingness to take responsibility for the thing or share complicity in it. In the New Testament book of Matthew, verse 27:24 gives an account of Pontius Pilate washing his hands of the decision to crucify Jesus: "When Pilate saw that he could prevail nothing, but that rather a tumult was made, he took water, and washed his hands before the multitude, saying, 'I am innocent of the blood of this just person.'"

Tsukubai, provided at a Japanese temple for symbolic hand washing and mouth rinsing

In Shakespeare's Macbeth, Lady Macbeth begins to compulsively wash her hands in an attempt to cleanse an imagined stain, representing her guilty conscience regarding crimes she had committed and induced her husband to commit.

It has also been found that people, after having recalled or contemplated unethical acts, tend to wash hands more often than others, and tend to value hand washing equipment more. Furthermore, those who are allowed to wash their hands after such a contemplation are less likely to engage in other "cleansing" compensatory actions, such as volunteering.

Excessive hand washing is commonly seen as a symptom of obsessive-compulsive disorder (OCD).

Pros and cons of hand washing practices

Pros

- helps minimize the spread of influenza

- diarrhea prevention

- avoiding respiratory infections

- a preventive measure against infant deaths during home births

- improved hand washing practices have been shown to lead to small improvements in growth in length in children under five years of age

Cons

- frequent washing can lead to skin damage

What is food safety?

Food safety is a scientific discipline describing the handling, preparation, and storage of food in ways that prevent foodborne illness. This includes a number of routines that should be followed to avoid potentially severe health hazards. The tracks within this line of thought are safety between industry and the market, and then between the market and the consumer. In considering industry-to-market practices, food safety considerations include the origins of food, including the practices relating to food labeling, food hygiene, food additives and pesticide residues, as well as policies on biotechnology and food and guidelines for the management of governmental import and export inspection and certification systems for foods. In considering market-to-consumer practices, the usual thought is that food ought to be safe in the market, and the concern is safe delivery and preparation of the food for the consumer.

Food can transmit disease from person to person as well as serve as a growth medium for bacteria that can cause food poisoning. In developed countries there are intricate standards for food preparation, whereas in less developed countries the main issue is simply the availability of adequate safe water, which is usually a critical item. In theory, food poisoning is 100% preventable. The five key principles of food hygiene, according to the WHO, are:

1. Prevent contaminating food with pathogens spreading from people, pets, and pests.

2. Separate raw and cooked foods to prevent contaminating the cooked foods.

3. Cook foods for the appropriate length of time and at the appropriate temperature to kill pathogens.

4. Store food at the proper temperature.

5. Use safe water and safe raw materials.

ISO 22000

ISO 22000 is a standard developed by the International Organization for Standardization dealing with food safety. It is a general derivative of ISO 9000. The ISO 22000 international standard specifies the requirements for a food safety management system that involves interactive communication, system management, prerequisite programs, and HACCP principles.

Incidence

A 2003 World Health Organization (WHO) report concluded that about 30% of reported food poisoning outbreaks in the WHO European Region occur in private homes. According to the WHO and CDC, in the USA alone, annually, there are 76 million cases of foodborne illness leading to 325,000 hospitalizations and 5,000 deaths.

Regulatory agencies

WHO and FAO

In 2003, the WHO and FAO published the Codex Alimentarius which serves as a guideline to food safety.

However, according to Unit 04 - Communication of the Health & Consumers Directorate-General of the European Commission (SANCO): "While being recommendations for voluntary application by members, Codex standards serve in many cases as a basis for national legislation. The reference made to Codex food safety standards in the World Trade Organization's Agreement on Sanitary and Phytosanitary measures (SPS Agreement) means that Codex has far reaching implications for resolving trade disputes. WTO members that wish to apply stricter food safety measures than those set by Codex may be required to justify these measures scientifically." An agreement made in 2003 and signed by all member states, including all EU members, therefore does not guarantee access to the EU market. Codex Stan 240-2003 for coconut milk allows sulphite-containing additives such as E223 and E224 up to 30 mg/kg, but this does not mean they are allowed into the EU; see RASFF entries 2012.0834, 2011.1848 and 2011.168 from Denmark ("sulphite unauthorised in coconut milk from Thailand"). The same holds for polysorbate E435: see 2012.0838 from Denmark (unauthorised polysorbates in coconut milk) and 2007.AIC from France. Only for the latter additive, which had already been used for decades and is regarded as necessary, did the EU amend its regulations, with (EU) No 583/2012 of 2 July 2012.

Australia

Food Standards Australia New Zealand requires all food businesses to implement food safety systems. These systems are designed to ensure food is safe to consume and halt the increasing incidence of food poisoning, and they include basic food safety training for at least one person in each business. Food safety training is delivered in various forms by Registered Training Organizations (RTOs), after which staff are issued a nationally recognised unit of competency code on their certificate. Basic food safety training includes:

- Understanding the hazards associated with the main types of food and the conditions to prevent the growth of bacteria which can cause food poisoning and to prevent illness.

- Potential problems associated with product packaging such as leaks in vacuum packs, damage to packaging or pest infestation, as well as problems and diseases spread by pests.

- Safe food handling. This includes safe procedures for each process such as receiving, re-packing, food storage, preparation and cooking, cooling and re-heating, displaying products, handling products when serving customers, packaging, cleaning and sanitizing, pest control, transport and delivery. Also covers potential causes of cross contamination.

- Catering for customers who are particularly at risk of food-borne illness, as well as those with allergies or intolerance.

- Correct cleaning and sanitizing procedures, cleaning products and their correct use, and the storage of cleaning items such as brushes, mops and cloths.

- Personal hygiene, hand washing, illness, and protective clothing.

New legislation means that people responsible for serving unsafe food may be liable for heavy fines.

China

Food safety is a growing concern in Chinese agriculture. The Chinese government oversees agricultural production as well as the manufacture of food packaging, containers, chemical additives, drug production, and business regulation. In recent years, the Chinese government attempted to consolidate food regulation with the creation of the State Food and Drug Administration in 2003, and officials have also been under increasing public and international pressure to solve food safety problems. However, it appears that regulations are not well known by the trade. Labels used for "green" food, "organic" food and "pollution-free" food are not well recognized by traders and many are unclear about their meaning. A survey by the World Bank found that supermarket managers had difficulty in obtaining produce that met safety requirements and found that a high percentage of produce did not comply with established standards.

Traditional marketing systems, whether in China or the rest of Asia, presently provide little motivation or incentive for individual farmers to make improvements to either quality or safety as their produce tends to get grouped together with standard products as it progresses through the marketing channel. Direct linkages between farmer groups and traders or ultimate buyers, such as supermarkets, can help avoid this problem. Governments need to improve the condition of many markets through upgrading management and reinvesting market fees in physical infrastructure. Wholesale markets need to investigate the feasibility of developing separate sections to handle fruits and vegetables that meet defined safety and quality standards.

European Union

The Parliament of the European Union (EU) makes legislation in the form of directives and regulations, many of which are mandatory for member states and which therefore must be incorporated into individual countries' national legislation. As a very large organisation that exists to remove barriers to trade between member states, and over which individual member states have only a proportional influence, the outcome is often seen as an excessively bureaucratic "one size fits all" approach. However, in relation to food safety, the tendency to err on the side of maximum protection for the consumer may be seen as a positive benefit. The EU parliament is informed on food safety matters by the European Food Safety Authority.

Individual member states may also have other legislation and controls in respect of food safety, provided that they do not prevent trade with other states. Member states can differ considerably in their internal structures and approaches to the regulatory control of food safety.

France

The Agence nationale de sécurité sanitaire de l'alimentation, de l'environnement et du travail (ANSES) is a French governmental agency dealing with food safety.

Germany

The Federal Ministry of Food, Agriculture and Consumer Protection (BMELV) is a federal ministry of the Federal Republic of Germany.

History: Founded in 1949 as the Federal Ministry of Food, Agriculture and Forestry, the ministry kept this name until 2001, when it became the Federal Ministry of Consumer Protection, Food and Agriculture. On 22 November 2005 the name was changed again to its current form, the Federal Ministry of Food, Agriculture and Consumer Protection. The reason for this last change was to give all portfolios equal ranking, which was achieved by listing them alphabetically.

Vision: A balanced and healthy diet with safe food, distinct consumer rights and consumer information for various areas of life, a strong and sustainable agriculture, and prospects for rural areas are important goals of the BMELV.

The Federal Office of Consumer Protection and Food Safety is under the control of the BMELV. It carries out several duties through which it contributes to safer food and thereby strengthens health-based consumer protection in Germany. Food can be manufactured and sold within Germany without special permission, as long as it does not harm consumers' health and meets the general standards set by legislation. However, manufacturers, carriers, importers and retailers are responsible for the food they put into circulation. They are obliged to ensure and document the safety and quality of their food using in-house control mechanisms.

Hong Kong

In Hong Kong SAR, the Centre for Food Safety is in charge of ensuring food sold is safe and fit for consumption.

India

Food Safety and Standards Authority of India, established under the Food Safety and Standards Act, 2006, is the regulating body related to food safety and laying down of standards of food in India.

New Zealand

The New Zealand Food Safety Authority (NZFSA), or Te Pou Oranga Kai O Aotearoa, is the New Zealand government body responsible for food safety. The NZFSA is also the controlling authority for imports and exports of food and food-related products. As of 2012, the NZFSA is a division of the Ministry for Primary Industries (MPI) and is no longer a separate organization.

Pakistan

Pakistan does not have an integrated legal framework but has a set of laws that deal with various aspects of food safety. Although these laws were enacted a long time ago, they have considerable capacity to achieve at least a minimum level of food safety. However, like many other laws, they remain very poorly enforced. There are four laws that specifically deal with food safety. Three of these focus directly on issues related to food safety, while the fourth, the Pakistan Standards and Quality Control Authority Act, is indirectly relevant to food safety.

The Pure Food Ordinance 1960 consolidates and amends the law in relation to the preparation and sale of foods. All provinces and some northern areas have adopted this law with certain amendments. Its aim is to ensure the purity of food supplied to people in the market, and it therefore provides for preventing adulteration. The Pure Food Ordinance 1960 does not apply to cantonment areas, which are covered by a separate law, the Cantonment Pure Food Act, 1966. There is no substantial difference between the two laws, and even their rules of operation are very similar.

The Pakistan Hotels and Restaurants Act, 1976 applies to all hotels and restaurants in Pakistan and seeks to control and regulate the rates and standard of service of hotels and restaurants. In addition to other provisions, under section 22(2), the sale of food or beverages that are contaminated, not prepared hygienically, or served in utensils that are not hygienic or clean is an offense. There are no express provisions for consumer complaints in the Pakistan Hotels and Restaurants Act, 1976, the Pakistan Penal Code, 1860, or the Pakistan Standards and Quality Control Authority Act, 1996. The laws do not prevent citizens from lodging complaints with the government officials concerned; however, the consideration and handling of complaints is at the discretion of the officials.

South Korea

Korea Food & Drug Administration

The Korea Food & Drug Administration (KFDA) has worked on food safety since 1945. It is part of the Government of South Korea.

IOAS-Organic Certification Bodies Registered in KFDA: "Organic" or related claims may appear on food product labels when the KFDA considers the organic certificates valid. The KFDA accepts organic certificates issued by (1) certification bodies accredited by IFOAM (International Federation of Organic Agriculture Movements) or (2) government-accredited certification bodies; 328 bodies in 29 countries have been registered with the KFDA.

Food Import Report: Under the Food Import Report requirement, importers must report or register what they import. The competent authorities are as follows:

| Product | Authority |
| --- | --- |
| Imported Agricultural Products, Processed Foods, Food Additives, Utensils, Containers & Packages or Health Functional Foods | KFDA (Korea Food and Drug Administration) |
| Imported Livestock, Livestock products (including Dairy products) | NVRQS (National Veterinary Research and Quarantine Service) |
| Packaged meat, milk & dairy products (butter, cheese), hamburger patties, meatballs and other processed products stipulated by the Livestock Sanitation Management Act | NVRQS (National Veterinary Research and Quarantine Service) |
| Imported Marine products; fresh, chilled, frozen, salted, dehydrated, eviscerated marine produce whose characteristics can still be recognized | NFIS (National Fisheries Products Quality Inspection Service) |

National Institute of Food and Drug Safety Evaluation

The National Institute of Food and Drug Safety Evaluation (NIFDS) is a national organization for toxicological tests and research. Under the Korea Food & Drug Administration, the Institute performs research on toxicology, pharmacology, and risk analysis of foods, drugs, and their additives. The Institute strives primarily to understand important biological triggering mechanisms and to improve methods for assessing human exposure, sensitivities, and risk by (1) conducting basic, applied, and policy research that closely examines biologically triggered harmful effects of regulated products such as foods, food additives, and drugs, and (2) operating the national toxicology program for toxicological test development and the inspection and assessment of hazardous chemical substances. The Institute ensures safety through investigation and research by its own researchers and through contract research by external academics and research centers.

United Kingdom

In the UK, the Food Standards Agency is an independent government department responsible for food safety and hygiene. It works with businesses to help them produce safe food, and with local authorities to enforce food safety regulations. In 2006 food hygiene legislation changed and new requirements came into force. The main requirement resulting from this change is that anyone who owns or runs a food business in the UK must have a documented Food Safety Management System based on the principles of Hazard Analysis and Critical Control Points (HACCP).

United States

The US food system is regulated by numerous federal, state and local officials. It has been criticized as lacking in "organization, regulatory tools, and not addressing food borne illness."

Federal level regulation

The Food and Drug Administration publishes the Food Code, a model set of guidelines and procedures that assists food control jurisdictions by providing a scientifically sound technical and legal basis for regulating the retail and food service industries, including restaurants, grocery stores and institutional foodservice providers such as nursing homes. Regulatory agencies at all levels of government in the United States use the FDA Food Code to develop or update food safety rules in their jurisdictions that are consistent with national food regulatory policy. According to the FDA, 48 of 56 states and territories, representing 79% of the U.S. population, have adopted food codes patterned after one of the five versions of the Food Code, beginning with the 1993 edition.

In the United States, federal regulations governing food safety are fragmented and complicated, according to a February 2007 report from the Government Accountability Office. There are 15 agencies sharing oversight responsibilities in the food safety system, although the two primary agencies are the U.S. Department of Agriculture (USDA) Food Safety and Inspection Service (FSIS), which is responsible for the safety of meat, poultry, and processed egg products, and the Food and Drug Administration (FDA), which is responsible for virtually all other foods.

The Food Safety and Inspection Service has approximately 7,800 inspection program personnel working in nearly 6,200 federally inspected meat, poultry and processed egg establishments. FSIS is charged with administering and enforcing the Federal Meat Inspection Act, the Poultry Products Inspection Act, the Egg Products Inspection Act, portions of the Agricultural Marketing Act, the Humane Slaughter Act, and the regulations that implement these laws. FSIS inspection program personnel inspect every animal before slaughter, and each carcass after slaughter to ensure public health requirements are met. In fiscal year (FY) 2008, this included about 50 billion pounds of livestock carcasses, about 59 billion pounds of poultry carcasses, and about 4.3 billion pounds of processed egg products. At U.S. borders, they also inspected 3.3 billion pounds of imported meat and poultry products.

Industry pressure

There have been concerns over the efficacy of safety practices and food industry pressure on U.S. regulators. A study reported by Reuters found that "the food industry is jeopardizing U.S. public health by withholding information from food safety investigators or pressuring regulators to withdraw or alter policy designed to protect consumers". A survey found that 25% of the U.S. government inspectors and scientists surveyed had, during the past year, experienced corporate interests forcing their food safety agency to withdraw or modify agency policy or action that protects consumers. Scientists have observed that management undercuts field inspectors who stand up for food safety against industry pressure. According to Dr. Dean Wyatt, a USDA veterinarian who oversees federal slaughter house inspectors, "Upper level management does not adequately support field inspectors and the actions they take to protect the food supply. Not only is there lack of support, but there's outright obstruction, retaliation and abuse of power."

State and local regulation

A number of U.S. states have their own meat inspection programs that substitute for USDA inspection for meats that are sold only in-state. Certain state programs have been criticized for undue leniency to bad practices.

However, other state food safety programs supplement, rather than replace, federal inspections, generally with the goal of increasing consumer confidence in the state's produce. For example, state health departments have a role in investigating outbreaks of food-borne disease, as in the case of the 2006 outbreak of Escherichia coli O157:H7 (a pathogenic strain of the ordinarily harmless bacterium E. coli) from processed spinach. Health departments also promote better food processing practices to eliminate these threats.

In addition to the US Food and Drug Administration, several states that are major producers of fresh fruits and vegetables (including California, Arizona and Florida) have their own state programs to test produce for pesticide residues.

Restaurants and other retail food establishments fall under state law and are regulated by state or local health departments. Typically these regulations require official inspections of specific design features, best food-handling practices, and certification of food handlers. In some places a letter grade or numerical score must be prominently posted following each inspection. In some localities, inspection deficiencies and remedial action are posted on the Internet.

Manufacturing control

HACCP guidelines

Consumer labeling

United Kingdom

Foodstuffs in the UK carry one of two labels to indicate the nature of the deterioration of the product and any subsequent health issues. EHO Food Hygiene certification is required to prepare and distribute food. While such a qualification has no specified expiry date, it is suggested that it be updated every five years because of changes in legislation.

Best before indicates a future date beyond which the food product may lose quality in terms of taste or texture amongst others, but does not imply any serious health problems if food is consumed beyond this date (within reasonable limits).

Use by indicates a legal date beyond which it is not permissible to sell a food product (usually one that deteriorates fairly rapidly after production) because of the potentially serious consequences of consuming pathogens. Leeway is sometimes provided by producers in stating display until dates so that products are not at their limit of safe consumption on the actual date stated (this latter is voluntary and not subject to regulatory control). This allows for the variability in production, storage and display methods.
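The distinction between the three date marks can be sketched as a small rule. This is a simplified illustration of the description above, not a statement of UK law; the names `DateLabel` and `sale_permitted` are hypothetical, and treating the marked date itself as still permitted is an assumption.

```python
from datetime import date
from enum import Enum


class DateLabel(Enum):
    BEST_BEFORE = "best before"      # quality indicator only
    USE_BY = "use by"                # legal limit on sale
    DISPLAY_UNTIL = "display until"  # voluntary, not regulated


def sale_permitted(label: DateLabel, marked: date, today: date) -> bool:
    """Return False only when a 'use by' date has passed.

    Assumption: sale on the marked date itself is still permitted.
    """
    if label is DateLabel.USE_BY:
        return today <= marked
    # 'Best before' and 'display until' do not block sale in this model.
    return True
```

In this sketch a product one day past its use by date is rejected, while the same product marked best before is still accepted, reflecting that best before concerns quality rather than safety.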

United States

With the exception of infant formula and baby foods, which must be withdrawn by their expiration date, federal law does not require expiration dates. For all other foods, except dairy products in some states, freshness dating is strictly voluntary on the part of manufacturers. In response to consumer demand, perishable foods are typically labelled with a Sell by date. It is up to the consumer to decide how long after the Sell by date a package is usable. Other common dating statements are Best if used by, Use-by date, Expiration date, Guaranteed fresh <date>, and Pack date.

Australia and New Zealand

Guide to Food Labelling and Other Information Requirements: This guide provides background information on the general labelling requirements in the Code. The information in this guide applies both to food for retail sale and to food for catering purposes. Foods for catering purposes means those foods for use in restaurants, canteens, schools, caterers or self-catering institutions, where food is offered for immediate consumption. Labelling and information requirements in the new Code apply both to food sold or prepared for sale in Australia and New Zealand and to food imported into Australia and New Zealand. The guide covers Warning and Advisory Declarations, Ingredient Labelling, Date Marking, Nutrition Information Requirements, Legibility Requirements for Food Labels, Percentage Labelling, and Information Requirements for Foods Exempt from Bearing a Label.

what is Sewage treatment ?

Sewage Treatment is the process of removing contaminants from wastewater, including household sewage and runoff (effluents). It includes physical, chemical, and biological processes to remove physical, chemical and biological contaminants. Its objective is to produce an environmentally safe fluid waste stream (or treated effluent) and a solid waste (or treated sludge) suitable for disposal or reuse (usually as farm fertilizer). With suitable technology, it is possible to re-use sewage effluent for drinking water, although this is usually only done in places with limited water supplies, such as Windhoek and Singapore.

History

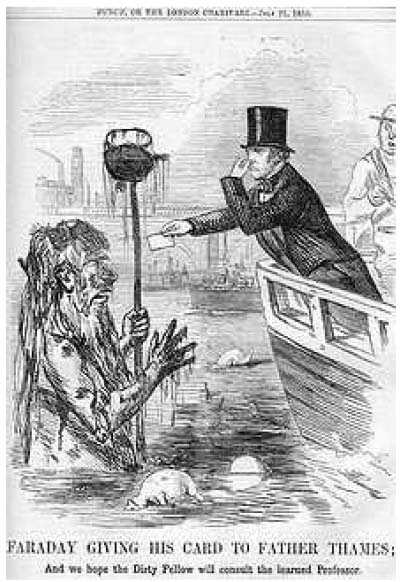

The Great Stink of 1858 stimulated research into the problem of sewage treatment. In this caricature in The Times, Michael Faraday reports to Father Thames on the state of the river.

Basic sewer systems were used for waste removal in ancient Mesopotamia, where vertical shafts carried the waste away into cesspools. Similar systems existed in the Indus Valley civilization in modern day India and in Ancient Crete and Greece. In the Middle Ages the sewer systems built by the Romans fell into disuse and waste was collected into cesspools that were periodically emptied by workers known as 'rakers' who would often sell it as fertilizer to farmers outside the city.

Modern sewage systems were first built in the mid-nineteenth century as a reaction to the worsening sanitary conditions brought on by heavy industrialization and urbanization. Due to the contaminated water supply, cholera outbreaks occurred in 1832, 1849 and 1855 in London, killing tens of thousands of people. This, combined with the Great Stink of 1858, when the smell of untreated human waste in the River Thames became overpowering, and the report into sanitation reform of the Royal Commissioner Edwin Chadwick, led to the Metropolitan Commission of Sewers appointing Sir Joseph Bazalgette to construct a vast underground sewage system for the safe removal of waste. Contrary to Chadwick's recommendations, Bazalgette's system, and others later built in Continental Europe, did not pump the sewage onto farm land for use as fertilizer; it was simply piped to a natural waterway away from population centres, and pumped back into the environment.

Early attempts

One of the first attempts at diverting sewage for use as a fertilizer in the farm was made by the cotton mill owner James Smith in the 1840s. He experimented with a piped distribution system initially proposed by James Vetch that collected sewage from his factory and pumped it into the outlying farms, and his success was enthusiastically followed by Edwin Chadwick and supported by organic chemist Justus von Liebig.

The idea was officially adopted by the Health of Towns Commission, and various schemes (known as sewage farms) were trialled by different municipalities over the next 50 years. At first, the heavier solids were channeled into ditches on the side of the farm and were covered over when full, but soon flat-bottomed tanks were employed as reservoirs for the sewage; the earliest patent was taken out by William Higgs in 1846 for "tanks or reservoirs in which the contents of sewers and drains from cities, towns and villages are to be collected and the solid animal or vegetable matters therein contained, solidified and dried..." Improvements to the design of the tanks included the introduction of the horizontal-flow tank in the 1850s and the radial-flow tank in 1905.

These tanks had to be manually de-sludged periodically, until the introduction of automatic mechanical de-sludgers in the early 1900s.

The precursor to the modern septic tank was the cesspool, in which the water was sealed off to prevent contamination and the solid waste was slowly liquefied by anaerobic action; it was invented by L. H. Mouras in France in the 1860s. Donald Cameron, as City Surveyor for Exeter, patented an improved version in 1895, which he called a 'septic tank', septic having the meaning of 'bacterial'. These are still in worldwide use, especially in rural areas unconnected to large-scale sewage systems.

Chemical treatment

It was not until the late 19th century that it became possible to treat the sewage by chemically breaking it down through the use of microorganisms and removing the pollutants. Land treatment was also steadily becoming less feasible, as cities grew and the volume of sewage produced could no longer be absorbed by the farmland on the outskirts.

Sir Edward Frankland conducted experiments at the Sewage Farm in Croydon, England, during the 1870s and was able to demonstrate that filtration of sewage through porous gravel produced a nitrified effluent (the ammonia was converted into nitrate) and that the filter remained unclogged over long periods of time. This established the then revolutionary possibility of biological treatment of sewage using a contact bed to oxidize the waste. This concept was taken up by the chief chemist for the London Metropolitan Board of Works, William Dibdin, in 1887:

...in all probability the true way of purifying sewage...will be first to separate the sludge, and then turn into neutral effluent... retain it for a sufficient period, during which time it should be fully aerated, and finally discharge it into the stream in a purified condition. This is indeed what is aimed at and imperfectly accomplished on a sewage farm.

From 1885 to 1891 filters working on this principle were constructed throughout the UK and the idea was also taken up in the US at the Lawrence Experiment Station in Massachusetts, where Frankland's work was confirmed. In 1890 the LES developed a 'trickling filter' that gave a much more reliable performance.

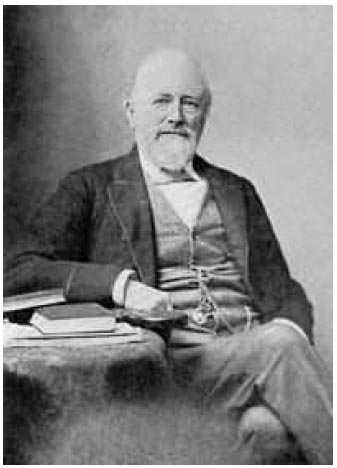

Sir Edward Frankland, a distinguished chemist, who demonstrated the possibility of chemically treating sewage in the 1870s.

Contact beds were developed in Salford, Manchester and by scientists working for the London City Council in the early 1890s. According to Christopher Hamlin, this was part of a conceptual revolution that replaced the philosophy that saw "sewage purification as the prevention of decomposition with one that tried to facilitate the biological process that destroy sewage naturally."

Contact beds were tanks containing an inert substance, such as stones or slate, that maximized the surface area available for microbial growth to break down the sewage. The sewage was held in the tank until it was fully decomposed and was then filtered out into the ground. This method quickly became widespread, especially in the UK, where it was used in Leicester, Sheffield, Manchester and Leeds. The bacterial bed was simultaneously developed by Joseph Corbett as Borough Engineer in Salford, and experiments in 1905 showed that his method was superior in that greater volumes of sewage could be purified better, and for longer periods of time, than could be achieved by the contact bed.

The Royal Commission on Sewage Disposal published its eighth report in 1912, which set what became the international standard for sewage discharge into rivers: the '20:30 standard', which allowed 20 mg of biochemical oxygen demand and 30 mg of suspended solids per litre.
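As a minimal sketch, the 20:30 standard can be expressed as a check on two measured concentrations. The function and constant names are hypothetical, and treating the limits as inclusive is an assumption made here for illustration.

```python
# Limits from the 20:30 standard described above, in mg per litre.
BOD_LIMIT_MG_PER_L = 20.0
SUSPENDED_SOLIDS_LIMIT_MG_PER_L = 30.0


def meets_20_30_standard(bod_mg_per_l: float, suspended_solids_mg_per_l: float) -> bool:
    """Return True if an effluent sample is within both limits.

    Assumption: a value exactly equal to a limit counts as compliant.
    """
    return (
        bod_mg_per_l <= BOD_LIMIT_MG_PER_L
        and suspended_solids_mg_per_l <= SUSPENDED_SOLIDS_LIMIT_MG_PER_L
    )
```

A sample must satisfy both limits at once: an effluent with low biochemical oxygen demand but 35 mg of suspended solids per litre would still fail the check.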

Activated sludge

The Davyhulme Sewage Works Laboratory, where the activated sludge process was developed in the early 20th century.

The development of secondary treatments to sewage in the early twentieth century led to arguably the single most significant improvement in public health and the environment during the course of the century, the invention of the 'activated sludge' process for the treatment of sewage.

In 1912, Dr. Gilbert Fowler, a scientist at the University of Manchester, observed experiments being conducted at the Lawrence Experiment Station at Massachusetts involving the aeration of sewage in a bottle that had been coated with algae. Fowler's engineering colleagues, Edward Ardern and William Lockett, who were conducting research for the Manchester Corporation Rivers Department at Davyhulme Sewage Works, experimented on treating sewage in a draw-and-fill reactor, which produced a highly treated effluent.

They aerated the waste-water continuously for about a month and were able to achieve a complete nitrification of the sample material. Believing that the sludge had been activated (in a similar manner to activated carbon) the process was named activated sludge.

Their results were published in their seminal 1914 paper, and the first full-scale continuous-flow system was installed at Worcester two years later. In the aftermath of the First World War the new treatment method spread rapidly, especially to the USA, Denmark, Germany and Canada. By the late 1930s, the activated sludge treatment was the predominant process used around the world.

Origins of sewage

Sewage is generated by residential, institutional, commercial and industrial establishments. It includes household waste liquid from toilets, baths, showers, kitchens, sinks and so forth that is disposed of via sewers. In many areas, sewage also includes liquid waste from industry and commerce. The separation and draining of household waste into greywater and blackwater is becoming more common in the developed world, with greywater being permitted to be used for watering plants or recycled for flushing toilets.

Sewage may include stormwater runoff. Sewerage systems capable of handling storm water are known as combined sewer systems. This design was common when urban sewerage systems were first developed, in the late 19th and early 20th centuries. Combined sewers require much larger and more expensive treatment facilities than sanitary sewers. Heavy volumes of storm runoff may overwhelm the sewage treatment system, causing a spill or overflow.

Sanitary sewers are typically much smaller than combined sewers, and they are not designed to transport stormwater. Backups of raw sewage can occur if excessive infiltration/inflow (dilution by stormwater and/or groundwater) is allowed into a sanitary sewer system.

Communities that have urbanized in the mid-20th century or later generally have built separate systems for sewage (sanitary sewers) and stormwater, because precipitation causes widely varying flows, reducing sewage treatment plant efficiency.

As rainfall travels over roofs and the ground, it may pick up various contaminants including soil particles and other sediment, heavy metals, organic compounds, animal waste, and oil and grease.

Some jurisdictions require stormwater to receive some level of treatment before being discharged directly into waterways. Examples of treatment processes used for stormwater include retention basins, wetlands, buried vaults with various kinds of media filters, and vortex separators (to remove coarse solids).

Process overview

Sewage can be treated close to where the sewage is created, a decentralized system (in septic tanks, biofilters or aerobic treatment systems), or be collected and transported by a network of pipes and pump stations to a municipal treatment plant, a centralized system (see sewerage and pipes and infrastructure). Sewage collection and treatment is typically subject to local, state and federal regulations and standards. Industrial sources of sewage often require specialized treatment processes (see Industrial wastewater treatment).

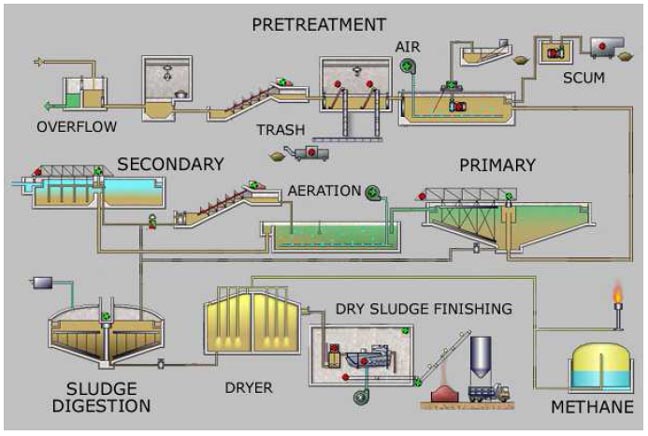

Sewage treatment generally involves three stages, called primary, secondary and tertiary treatment.

- Primary treatment consists of temporarily holding the sewage in a quiescent basin where heavy solids can settle to the bottom while oil, grease and lighter solids float to the surface. The settled and floating materials are removed and the remaining liquid may be discharged or subjected to secondary treatment.

- Secondary treatment removes dissolved and suspended biological matter. Secondary treatment is typically performed by indigenous, water-borne micro-organisms in a managed habitat. Secondary treatment may require a separation process to remove the micro-organisms from the treated water prior to discharge or tertiary treatment.

- Tertiary treatment is sometimes defined as anything more than primary and secondary treatment in order to allow discharge into a highly sensitive or fragile ecosystem (estuaries, low-flow rivers, coral reefs, etc.). Treated water is sometimes disinfected chemically or physically (for example, by lagoons and microfiltration) prior to discharge into a stream, river, bay, lagoon or wetland, or it can be used for the irrigation of a golf course, greenway or park. If it is sufficiently clean, it can also be used for groundwater recharge or agricultural purposes.

Simplified process flow diagram for a typical large-scale treatment plant

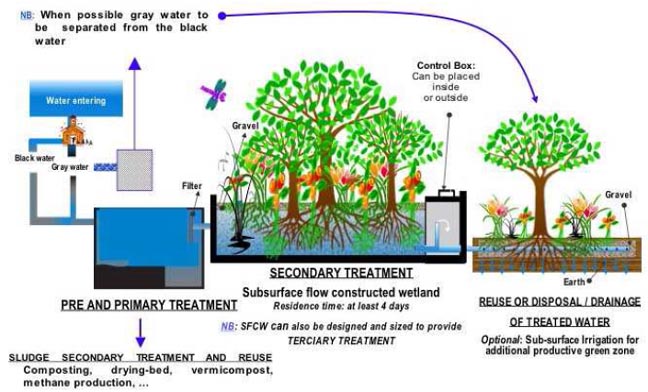

Process flow diagram for a typical treatment plant via subsurface flow constructed wetlands (SFCW)

Pretreatment

Pretreatment removes all materials that can be easily collected from the raw sewage before they damage or clog the pumps and sewage lines of primary treatment clarifiers. Objects that are commonly removed during pretreatment include trash, tree limbs, leaves, branches, and other large objects.

The influent sewage passes through a bar screen to remove all large objects, such as cans, rags, sticks and plastic packets, carried in the sewage stream. This is most commonly done with an automated mechanically raked bar screen in modern plants serving large populations, while in smaller or less modern plants, a manually cleaned screen may be used. The raking action of a mechanical bar screen is typically paced according to the accumulation on the bar screens and/or flow rate. The solids are collected and later disposed of in a landfill, or incinerated. Bar screens or mesh screens of varying sizes may be used to optimize solids removal. If gross solids are not removed, they become entrained in pipes and moving parts of the treatment plant, and can cause substantial damage and inefficiency in the process.

Grit removal

Pretreatment may include a sand or grit channel or chamber, where the velocity of the incoming sewage is adjusted to allow the settlement of sand, grit, stones, and broken glass. These particles are removed because they may damage pumps and other equipment. For small sanitary sewer systems, grit chambers may not be necessary, but grit removal is desirable at larger plants. Grit chambers come in three types: horizontal grit chambers, aerated grit chambers and vortex grit chambers.

Flow equalization

Clarifiers and mechanized secondary treatment are more efficient under uniform flow conditions. Equalization basins may be used for temporary storage of diurnal or wet-weather flow peaks. Basins provide a place to temporarily hold incoming sewage during plant maintenance and a means of diluting and distributing batch discharges of toxic or high-strength waste which might otherwise inhibit biological secondary treatment (including portable toilet waste, vehicle holding tanks, and septic tank pumpers). Flow equalization basins require variable discharge control, typically include provisions for bypass and cleaning, and may also include aerators. Cleaning may be easier if the basin is downstream of screening and grit removal.
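How much storage a basin needs to smooth diurnal peaks can be estimated with a simple mass balance. The sketch below uses a common textbook approach that is not taken from this article: it assumes the basin discharges at the constant average rate, and that the inflow pattern repeats every cycle. The function name and the hourly flows are hypothetical.

```python
from itertools import accumulate


def equalization_volume(hourly_inflow_m3: list[float]) -> float:
    """Estimate the storage needed to discharge at a constant average rate.

    Each entry is the inflow volume for one hour of a repeating cycle.
    The running sum of (inflow - average) tracks how far the basin level
    sits above or below its starting point; the spread between the highest
    and lowest values is the storage required.
    """
    if not hourly_inflow_m3:
        raise ValueError("at least one inflow value is required")
    average = sum(hourly_inflow_m3) / len(hourly_inflow_m3)
    running_surplus = list(accumulate(q - average for q in hourly_inflow_m3))
    return max(running_surplus) - min(running_surplus)


if __name__ == "__main__":
    # Hypothetical four-hour cycle: two quiet hours, then two peak hours.
    print(equalization_volume([0.0, 0.0, 40.0, 40.0]))
```

The estimate ignores bypass provisions, wet-weather events and any safety margin, all of which a real design would have to consider.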

Fat and grease removal

In some larger plants, fat and grease are removed by passing the sewage through a small tank where skimmers collect the fat floating on the surface. Air blowers in the base of the tank may also be used to help recover the fat as a froth. Many plants, however, use primary clarifiers with mechanical surface skimmers for fat and grease removal.

Primary treatment

In the primary sedimentation stage, sewage flows through large tanks, commonly called "pre-settling basins", "primary sedimentation tanks" or "primary clarifiers". The tanks are used to settle sludge while grease and oils rise to the surface and are skimmed off. Primary settling tanks are usually equipped with mechanically driven scrapers that continually drive the collected sludge towards a hopper in the base of the tank where it is pumped to sludge treatment facilities. Grease and oil from the floating material can sometimes be recovered for saponification (soap making).

Secondary treatment

Secondary treatment is designed to substantially degrade the biological content of the sewage, which is derived from human waste, food waste, soaps and detergent. The majority of municipal plants treat the settled sewage liquor using aerobic biological processes. To be effective, the biota require both oxygen and food to live. The bacteria and protozoa consume biodegradable soluble organic contaminants (e.g. sugars, fats, organic short-chain carbon molecules, etc.) and bind much of the less soluble fractions into floc. Secondary treatment systems are classified as fixed-film or suspended-growth systems.

• Fixed-film or attached growth systems include trickling filters, bio-towers, and rotating biological contactors, where the biomass grows on media and the sewage passes over its surface. The fixed-film principle has further developed into Moving Bed Biofilm Reactors (MBBR) and Integrated Fixed-Film Activated Sludge (IFAS) processes. An MBBR system typically requires a smaller footprint than suspended-growth systems.

• Suspended-growth systems include activated sludge, where the biomass is mixed with the sewage and can be operated in a smaller space than trickling filters that treat the same amount of water. However, fixed-film systems are more able to cope with drastic changes in the amount of biological material and can provide higher removal rates for organic material and suspended solids than suspended growth systems.

• Roughing filters are intended to treat particularly strong or variable organic loads, typically industrial, to allow them to then be treated by conventional secondary treatment processes. Characteristics include filters filled with media to which wastewater is applied. They are designed to allow high hydraulic loading and a high level of aeration. On larger installations, air is forced through the media using blowers. The resultant wastewater is usually within the normal range for conventional treatment processes.

A filter removes a small percentage of the suspended organic matter, while the majority of the organic matter undergoes a change of character through the biological oxidation and nitrification taking place in the filter. With this aerobic oxidation and nitrification, the organic solids are converted into a coagulated suspended mass, which is heavier and bulkier and can settle to the bottom of a tank. The effluent of the filter is therefore passed through a sedimentation tank, called a secondary clarifier, secondary settling tank or humus tank.

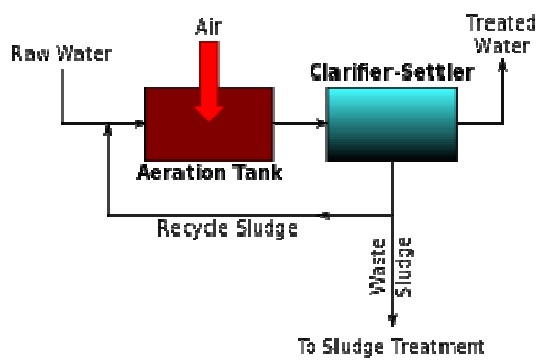

A generalized schematic of an activated sludge process.

Activated sludge

In general, activated sludge plants encompass a variety of mechanisms and processes that use dissolved oxygen to promote the growth of biological floc that substantially removes organic material.

Biological floc, as mentioned above, is an ecosystem of living biota that subsists on nutrients from the inflowing primary settling tank (or clarifier) effluent.

These mostly carbonaceous dissolved solids undergo aeration to be broken down and biologically oxidized or converted to carbon dioxide. Likewise, nitrogenous dissolved solids (amino acids, ammonia, etc.) are also oxidized (consumed) by the floc to nitrites, nitrates, and, in some processes, to nitrogen gas through denitrification.

While denitrification is encouraged in some treatment processes, in many suspended aeration plants denitrification will impair the settling of the floc and lead to poor quality effluent.

In either case, the settled floc is either recycled to the inflowing primary effluent to regrow, or partially 'wasted' (diverted) to solids dewatering, or to digesting and then dewatering.

Interestingly, like most living creatures, activated sludge biota can get sick. This often takes the form of a floating brown foam caused by Nocardia.

While this so-called 'sewage fungus' (it isn't really a fungus) is the best known, there are many different fungi and protists that can overpopulate the floc and cause process upsets. Additionally, certain incoming chemical species, such as a heavy pesticide, a heavy metal (e.g.: plating company effluent) load, or extreme pH, can kill the biota of an activated sludge reactor ecosystem. Such problems are tested for, and if caught in time, can be neutralized.

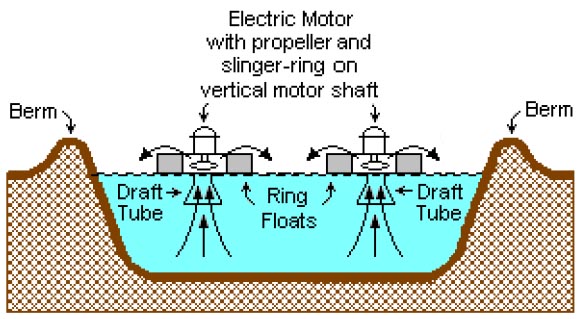

A typical surface-aerated basin (using motor-driven floating aerators)

Aerobic granular sludge

Activated sludge systems can be transformed into aerobic granular sludge systems (aerobic granulation) which enhance the benefits of activated sludge, like increased biomass retention due to high sludge settlability.

Surface-aerated basins (lagoons)

Many small municipal sewage systems in the United States (1 million gal./day or less) use aerated lagoons.

Most biological oxidation processes for treating industrial wastewaters have in common the use of oxygen (or air) and microbial action. Surface-aerated basins achieve 80 to 90 percent removal of BOD with retention times of 1 to 10 days. The basins may range in depth from 1.5 to 5.0 metres and use motor-driven aerators floating on the surface of the wastewater.

In an aerated basin system, the aerators provide two functions: they transfer air into the basins required by the biological oxidation reactions, and they provide the mixing required for dispersing the air and for contacting the reactants (that is, oxygen, wastewater and microbes). Typically, the floating surface aerators are rated to deliver the amount of air equivalent to 1.8 to 2.7 kg O2/kW·h. However, they do not provide as good mixing as is normally achieved in activated sludge systems and therefore aerated basins do not achieve the same performance level as activated sludge units.
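The ranges quoted above lend themselves to quick back-of-the-envelope estimates. The sketch below is illustrative only: the function names and example inputs are hypothetical, and it assumes the quoted removal and aerator ratings apply directly, which ignores site-specific factors such as temperature and mixing.

```python
# Ranges quoted in the text above.
BOD_REMOVAL_FRACTION = (0.80, 0.90)        # 80 to 90 percent BOD removal
AERATOR_RATING_KG_O2_PER_KWH = (1.8, 2.7)  # floating surface aerator rating


def effluent_bod_range(influent_bod_mg_per_l: float) -> tuple[float, float]:
    """Return (best, worst) effluent BOD in mg/L for the quoted removal range."""
    low_removal, high_removal = BOD_REMOVAL_FRACTION
    best = influent_bod_mg_per_l * (1.0 - high_removal)
    worst = influent_bod_mg_per_l * (1.0 - low_removal)
    return best, worst


def aeration_energy_range_kwh(oxygen_kg: float) -> tuple[float, float]:
    """Return (min, max) energy in kWh needed to deliver oxygen_kg of O2."""
    low_rating, high_rating = AERATOR_RATING_KG_O2_PER_KWH
    # A higher rating delivers more oxygen per kWh, so it needs less energy.
    return oxygen_kg / high_rating, oxygen_kg / low_rating


if __name__ == "__main__":
    # Hypothetical inputs chosen only to illustrate the arithmetic.
    print(effluent_bod_range(200.0))
    print(aeration_energy_range_kwh(270.0))
```

For a hypothetical influent of 200 mg/L, the quoted removal range corresponds to an effluent of roughly 20 to 40 mg/L, and delivering 270 kg of oxygen would take roughly 100 to 150 kWh at the quoted ratings.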

Biological oxidation processes are sensitive to temperature and, between 0 °C and 40 °C, the rate of biological reactions increases with temperature. Most surface-aerated vessels operate at between 4 °C and 32 °C.

Filter beds (oxidizing beds)

In older plants and those receiving variable loadings, trickling filter beds are used, in which the settled sewage liquor is spread onto the surface of a bed made up of coke (carbonized coal), limestone chips or specially fabricated plastic media. Such media must have large surface areas to support the biofilms that form. The liquor is typically distributed through perforated spray arms. The distributed liquor trickles through the bed and is collected in drains at the base. These drains also provide a source of air, which percolates up through the bed and keeps it aerobic. Biological films of bacteria, protozoa and fungi form on the media’s surfaces and consume or otherwise reduce the organic content. This biofilm is often grazed by insect larvae, snails, and worms, which help maintain an optimal thickness. Overloading of beds increases the thickness of the film, leading to clogging of the filter media and ponding on the surface. Recent advances in media and process microbiology design overcome many issues with trickling filter designs.

Constructed wetlands

Constructed wetlands (CWs), which can be surface flow or subsurface flow and horizontal or vertical flow, include engineered reedbeds and belong to the family of phytorestoration and ecotechnologies. They provide a high degree of biological improvement and, depending on design, act as primary, secondary and sometimes tertiary treatment (see also phytoremediation). One example is a small reedbed used to clean the drainage from the elephants' enclosure at Chester Zoo in England; numerous CWs are used to recycle the water of the city of Honfleur in France and of numerous other towns in Europe, the US, Asia and Australia. They are known to be highly productive systems because they copy natural wetlands, which are called the "kidneys of the earth" for their fundamental recycling capacity in the hydrological cycle of the biosphere. Robust and reliable, their treatment capacity improves over time, unlike that of conventional treatment plants, whose machinery ages. They are increasingly used, although adequate and experienced design is more critical than for other systems, and space limitations may impede their use.

Biological aerated filters

Biological Aerated (or Anoxic) Filters (BAF), or biofilters, combine filtration with biological carbon reduction, nitrification or denitrification. A BAF usually includes a reactor filled with filter media. The media is either in suspension or supported by a gravel layer at the foot of the filter. The dual purpose of this media is to support the highly active biomass that is attached to it and to filter suspended solids.

Carbon reduction and ammonia conversion occur in aerobic mode and are sometimes achieved in a single reactor, while nitrate conversion occurs in anoxic mode. A BAF is operated in either upflow or downflow configuration, depending on the design specified by the manufacturer.

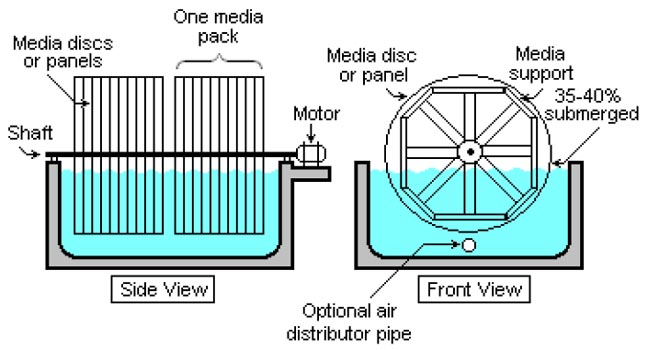

Schematic of a typical rotating biological contactor (RBC). The treated effluent clarifier/settler is not included in the diagram.

Rotating biological contactors

Rotating Biological Contactors (RBCs) are mechanical secondary treatment systems that are robust and capable of withstanding surges in organic load. RBCs were first installed in Germany in 1960 and have since been developed and refined into a reliable operating unit. The rotating disks support the growth of bacteria and micro-organisms present in the sewage, which break down and stabilize organic pollutants. To be successful, micro-organisms need both oxygen to live and food to grow. Oxygen is obtained from the atmosphere as the disks rotate. As the micro-organisms grow, they build up on the media until they are sloughed off by the shear forces created as the disks rotate through the sewage. Effluent from the RBC is then passed through final clarifiers, where the micro-organisms in suspension settle as a sludge. The sludge is withdrawn from the clarifier for further treatment.

A functionally similar biological filtering system has become popular as part of home aquarium filtration and purification. The aquarium water is drawn up out of the tank and then cascaded over a freely spinning corrugated fiber-mesh wheel before passing through a media filter and back into the aquarium. The spinning mesh wheel develops a biofilm coating of microorganisms that feed on the suspended wastes in the aquarium water and are also exposed to the atmosphere as the wheel rotates. This arrangement is especially good at removing the urea and ammonia excreted into the aquarium water by the fish and other animals.

Membrane bioreactors

Membrane bioreactors (MBR) combine activated sludge treatment with a membrane liquid-solid separation process. The membrane component uses low pressure microfiltration or ultrafiltration membranes and eliminates the need for clarification and tertiary filtration. The membranes are typically immersed in the aeration tank; however, some applications utilize a separate membrane tank. One of the key benefits of an MBR system is that it effectively overcomes the limitations associated with poor settling of sludge in conventional activated sludge (CAS) processes. The technology permits bioreactor operation with considerably higher mixed liquor suspended solids (MLSS) concentration than CAS systems, which are limited by sludge settling. The process is typically operated at MLSS in the range of 8,000–12,000 mg/L, while CAS are operated in the range of 2,000–3,000 mg/L. The elevated biomass concentration in the MBR process allows for very effective removal of both soluble and particulate biodegradable materials at higher loading rates. Thus increased sludge retention times, usually exceeding 15 days, ensure complete nitrification even in extremely cold weather.
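The effect of the higher MLSS concentration on tank size can be illustrated with simple arithmetic. The sketch below assumes, purely for illustration, that both processes must hold the same biomass inventory (a hypothetical 30,000 kg); real designs depend on many other factors, and the function name is not taken from any standard.

```python
# Compare reactor volume needed to hold the same biomass at different MLSS.
CAS_MLSS_MG_L = (2_000, 3_000)   # conventional activated sludge range (from the text)
MBR_MLSS_MG_L = (8_000, 12_000)  # membrane bioreactor range (from the text)


def reactor_volume_m3(biomass_kg: float, mlss_mg_per_l: float) -> float:
    """Volume holding biomass_kg at the given MLSS (1 mg/L equals 1 g/m3)."""
    if mlss_mg_per_l <= 0:
        raise ValueError("MLSS must be positive")
    kg_per_m3 = mlss_mg_per_l / 1000.0
    return biomass_kg / kg_per_m3


if __name__ == "__main__":
    biomass_kg = 30_000.0  # hypothetical biomass inventory
    for name, mlss_range in (("CAS", CAS_MLSS_MG_L), ("MBR", MBR_MLSS_MG_L)):
        volumes = [reactor_volume_m3(biomass_kg, m) for m in mlss_range]
        print(f"{name}: {min(volumes):,.0f} to {max(volumes):,.0f} m3")
```

Under this equal-inventory assumption, the MBR tank is roughly a quarter of the CAS volume, which is consistent with the small footprint attributed to MBR systems.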

The cost of building and operating an MBR is often higher than that of conventional methods of sewage treatment. Membrane filters can be blinded with grease or abraded by suspended grit, and they lack a clarifier's flexibility to pass peak flows. However, the technology has become increasingly popular for reliably pretreated waste streams and has gained wider acceptance where infiltration and inflow have been controlled, and life-cycle costs have been steadily decreasing. The small footprint of MBR systems and the high-quality effluent they produce make them particularly useful for water reuse applications.

Secondary sedimentation

Secondary sedimentation tank at a rural treatment plant.

The final step in the secondary treatment stage is to settle out the biological floc or filter material through a secondary clarifier and to produce sewage water containing low levels of organic material and suspended matter.

Tertiary treatment

The purpose of tertiary treatment, also called "effluent polishing", is to provide a final treatment stage that further improves the effluent quality before it is discharged to the receiving environment (sea, river, lake, wetlands, ground, etc.). More than one tertiary treatment process may be used at any treatment plant. If disinfection is practised, it is always the final process.

Filtration

Sand filtration removes much of the residual suspended matter. Filtration over activated carbon, also called carbon adsorption, removes residual toxins.

Lagooning

A sewage treatment plant and lagoon in Everett, Washington, United States.

Lagooning provides settlement and further biological improvement through storage in large man-made ponds or lagoons. These lagoons are highly aerobic, and colonization by native macrophytes, especially reeds, is often encouraged. Small filter-feeding invertebrates such as Daphnia and species of Rotifera greatly assist in treatment by removing fine particulates.

Nutrient removal

Wastewater may contain high levels of the nutrients nitrogen and phosphorus. Excessive release to the environment can lead to a buildup of nutrients, called eutrophication, which can in turn encourage the overgrowth of weeds, algae, and cyanobacteria (blue-green algae). This may cause an algal bloom, a rapid growth in the population of algae. The algae numbers are unsustainable and eventually most of them die. The decomposition of the algae by bacteria uses up so much of the oxygen in the water that most or all of the animals die, which creates more organic matter for the bacteria to decompose. In addition to causing deoxygenation, some algal species produce toxins that contaminate drinking water supplies. Different treatment processes are required to remove nitrogen and phosphorus.

Nitrogen removal

Nitrogen is removed through the biological oxidation of nitrogen from ammonia to nitrate (nitrification), followed by denitrification, the reduction of nitrate to nitrogen gas. Nitrogen gas is released to the atmosphere and thus removed from the water.

Nitrification itself is a two-step aerobic process, each step facilitated by a different type of bacteria. The oxidation of ammonia (NH3) to nitrite (NO2−) is most often facilitated by Nitrosomonas spp. ("nitroso" referring to the formation of a nitroso functional group). Nitrite oxidation to nitrate (NO3−), though traditionally believed to be facilitated by Nitrobacter spp. ("nitro" referring to the formation of a nitro functional group), is now known to be facilitated in the environment almost exclusively by Nitrospira spp.
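The oxygen consumed by the two nitrification steps follows from their stoichiometry: 1.5 mol of O2 per mol of nitrogen for ammonia to nitrite, and 0.5 mol of O2 per mol of nitrogen for nitrite to nitrate. The sketch below computes the theoretical oxygen demand per gram of nitrogen. It ignores nitrogen taken up for cell growth, so it is an upper-bound estimate, and the 40 mg/L ammonia-nitrogen concentration is an assumed example value.

```python
# Theoretical oxygen demand of nitrification from stoichiometry.
#   Step 1: NH3 + 1.5 O2 -> NO2- + H+ + H2O
#   Step 2: NO2- + 0.5 O2 -> NO3-
MOLAR_MASS_O2 = 31.998  # g/mol
MOLAR_MASS_N = 14.007   # g/mol

MOL_O2_AMMONIA_TO_NITRITE = 1.5
MOL_O2_NITRITE_TO_NITRATE = 0.5


def oxygen_per_g_nitrogen(mol_o2_per_mol_n: float) -> float:
    """Grams of O2 consumed per gram of nitrogen oxidized."""
    return mol_o2_per_mol_n * MOLAR_MASS_O2 / MOLAR_MASS_N


if __name__ == "__main__":
    step1 = oxygen_per_g_nitrogen(MOL_O2_AMMONIA_TO_NITRITE)
    step2 = oxygen_per_g_nitrogen(MOL_O2_NITRITE_TO_NITRATE)
    total = step1 + step2
    print(f"Ammonia to nitrite: {step1:.2f} g O2 per g N")
    print(f"Nitrite to nitrate: {step2:.2f} g O2 per g N")
    print(f"Complete nitrification: {total:.2f} g O2 per g N")

    ammonia_n_mg_l = 40.0  # hypothetical influent ammonia-nitrogen
    print(f"Demand at {ammonia_n_mg_l:.0f} mg/L N: {total * ammonia_n_mg_l:.0f} mg/L O2")
```

About three quarters of the oxygen is used in the first step, so the ammonia-oxidizing stage sets most of the aeration requirement.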

Denitrification requires anoxic conditions to encourage the appropriate biological communities to form. It is facilitated by a wide diversity of bacteria. Sand filters, lagooning and reed beds can all be used to reduce nitrogen, but the activated sludge process (if designed well) can do the job most easily. Since denitrification is the reduction of nitrate to dinitrogen (molecular nitrogen) gas, an electron donor is needed. Depending on the wastewater, this can be organic matter (from faeces), sulfide, or an added donor such as methanol. To achieve the desired denitrification, the sludge in the anoxic (denitrification) tanks, a mixture of recirculated mixed liquor, return activated sludge (RAS) and raw influent, must be mixed well, for example with submersible mixers.

Sometimes the conversion of toxic ammonia to nitrate alone is referred to as tertiary treatment.

Many sewage treatment plants use centrifugal pumps to transfer the nitrified mixed liquor from the aeration zone to the anoxic zone for denitrification. These pumps are often referred to as Internal Mixed Liquor Recycle (IMLR) pumps.

The bacterium Brocadia anammoxidans is being researched for its potential in sewage treatment. It can remove nitrogen from wastewater by performing the anaerobic oxidation of ammonium, and it can also produce the rocket fuel hydrazine from wastewater.

Phosphorus removal

Each person excretes between 200 and 1000 grams of phosphorus annually. Studies of United States sewage in the late 1960s estimated mean per capita contributions of 500 grams in urine and feces, 1000 grams in synthetic detergents, and lesser variable amounts used as corrosion and scale control chemicals in water supplies. Source control via alternative detergent formulations has subsequently reduced the largest contribution, but the content of urine and feces will remain unchanged. Phosphorus removal is important as it is a limiting nutrient for algae growth in many fresh water systems. (For a description of the negative effects of algae, see Nutrient removal). It is also particularly important for water reuse systems where high phosphorus concentrations may lead to fouling of downstream equipment such as reverse osmosis.
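The per capita figures from the late 1960s studies can be scaled to a community to show the relative size of each source. The sketch below uses a hypothetical population of 10,000; the function name is illustrative, and the smaller, variable contribution from corrosion and scale control chemicals is left out because the text gives no figure for it.

```python
# Scale the per capita phosphorus contributions quoted above to a population.
URINE_AND_FECES_G_PER_YEAR = 500.0        # per person, from the cited studies
SYNTHETIC_DETERGENT_G_PER_YEAR = 1000.0   # per person, from the cited studies


def annual_phosphorus_kg(population: int, per_capita_g: float) -> float:
    """Annual phosphorus load in kilograms for the given population."""
    return population * per_capita_g / 1000.0


if __name__ == "__main__":
    population = 10_000  # hypothetical town
    excreted = annual_phosphorus_kg(population, URINE_AND_FECES_G_PER_YEAR)
    detergent = annual_phosphorus_kg(population, SYNTHETIC_DETERGENT_G_PER_YEAR)
    total = excreted + detergent
    print(f"Urine and feces: {excreted:,.0f} kg/year")
    print(f"Detergents:      {detergent:,.0f} kg/year")
    print(f"Detergent share: {detergent / total:.0%}")
```

With these figures, detergents account for two thirds of the combined load, which is why reformulating them was an effective form of source control.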

Phosphorus can be removed biologically in a process called enhanced biological phosphorus removal. In this process, specific bacteria, called polyphosphate-accumulating organisms (PAOs), are selectively enriched and accumulate large quantities of phosphorus within their cells (up to 20 percent of their mass). When the biomass enriched in these bacteria is separated from the treated water, these biosolids have a high fertilizer value.

Phosphorus removal can also be achieved by chemical precipitation, usually with salts of iron (e.g. ferric chloride), aluminum (e.g. alum), or lime. This may lead to excessive sludge production as hydroxides precipitate, and the added chemicals can be expensive. Chemical phosphorus removal requires a significantly smaller equipment footprint than biological removal, is easier to operate and is often more reliable. Another method for phosphorus removal is to use granular laterite.

Once removed, phosphorus, in the form of a phosphate-rich sludge, may be stored in a landfill or resold for use in fertilizer.

Disinfection